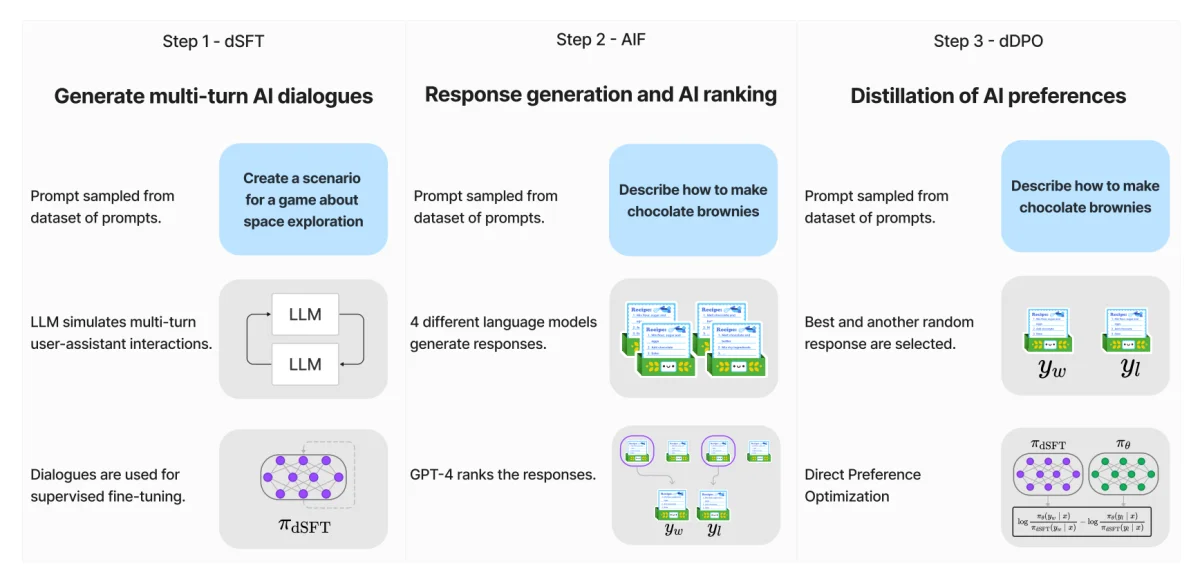

Supervised fine-tuning (SFT) is a machine learning technique for adapting a pre-trained model to a specific task. The model is first trained on a large, general dataset, then trained further on a smaller, labeled dataset for the target task. This allows the model to retain the general knowledge learned from the large dataset while adapting to the specific characteristics of the smaller one.
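The two-stage idea can be illustrated with a deliberately tiny sketch. It is not how an LLM or speech model is fine-tuned in practice: the "model" here is a hypothetical one-variable linear regressor, both datasets are synthetic, and the learning rates and epoch counts are arbitrary assumptions chosen only so the example converges. The names `train`, `mse`, `general_data`, and `task_data` are illustrative, not part of any library.

```python
def mse(params, data):
    """Mean squared error of the line y = w*x + b on labeled (x, y) pairs."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(params, data, lr, epochs):
    """Full-batch gradient descent on MSE, starting from the given params."""
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Stage 1 data (assumed): a "large" general dataset, 200 points from y = 2x + 1.
general_data = [(i / 100 - 1, 2 * (i / 100 - 1) + 1) for i in range(200)]

# Stage 2 data (assumed): a small labeled task dataset, 5 points from y = 2x + 3.
task_data = [(x, 2 * x + 3) for x in (-0.8, -0.3, 0.0, 0.4, 0.9)]

# Pre-train from scratch on the large dataset.
pretrained = train((0.0, 0.0), general_data, lr=0.1, epochs=500)
loss_before = mse(pretrained, task_data)

# Fine-tune: start from the pre-trained parameters, not from scratch,
# and continue training on the small labeled dataset with a lower learning rate.
finetuned = train(pretrained, task_data, lr=0.05, epochs=200)
loss_after = mse(finetuned, task_data)

print("pretrained (w, b):", pretrained, "task loss:", loss_before)
print("fine-tuned (w, b):", finetuned, "task loss:", loss_after)
```

In this toy setup the slope learned during pre-training carries over because the task data happens to share it, while the intercept shifts to fit the new labels. Real fine-tuning follows the same pattern (initialize from pre-trained weights, continue training on labeled task data), but whether general knowledge is preserved depends on the model, the data, and the training settings.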

Fine-Tune XLSR-Wav2Vec2 for low-resource ASR with 🤗 Transformers

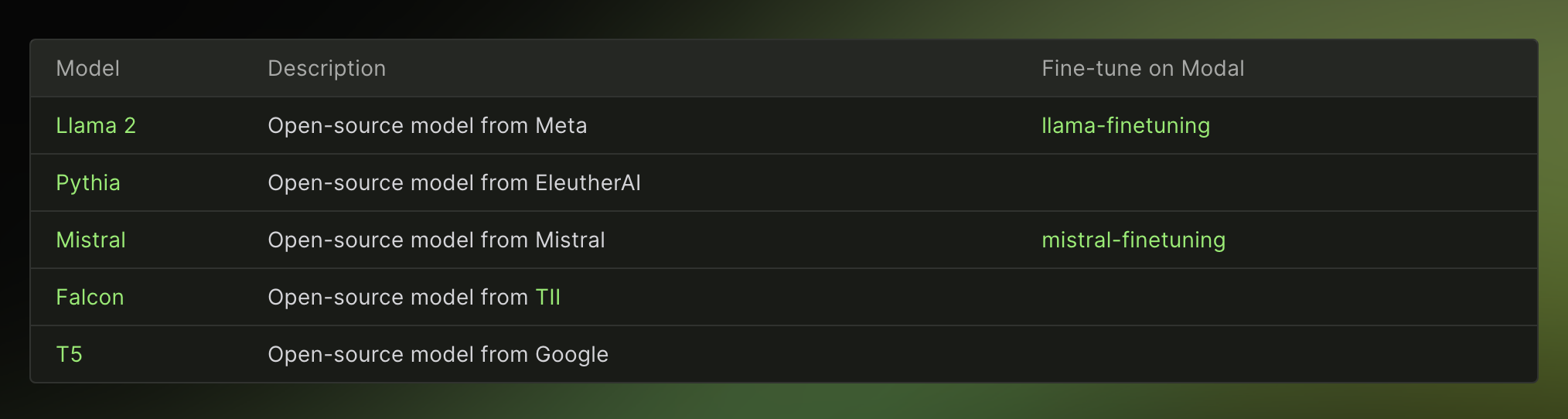

Fine-Tuning LLMs (Large Language Models)

Understanding and Using Supervised Fine-Tuning (SFT) for Language

Fine-Tuning LLMs Simplified: Beginning from the Basics (Part 1)

Fine-tuning Large Language Models series: Internal mechanism of

Exploring Large Language Models - Part 3, by Alex Punnen

What is Zephyr 7B? — Klu

Best Open Source LLMs of 2024 — Klu

Deep Learning for Instance Retrieval: A Survey

CoFRIDA: Self-Supervised Fine-Tuning for Human-Robot Co-Painting

JSAN, Free Full-Text

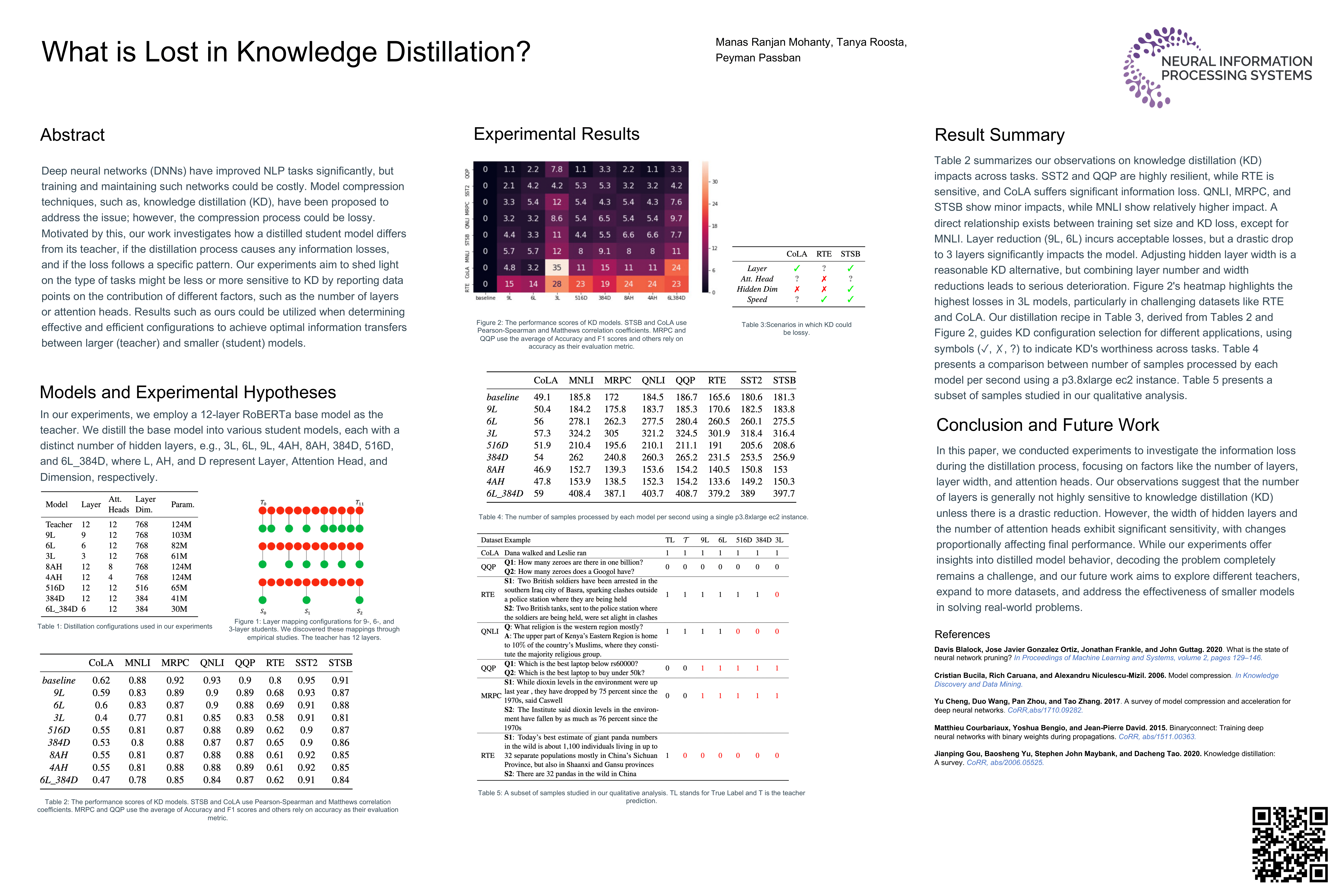

ENLSP NeurIPS Workshop 2023 ENLSP highlights some fundamental